I am the Sr. Design Researcher for a company called “General Electronics Co.,” which has recently been overwhelmed by customers calling customer service because their products are late or have not been delivered. General Electronics Co. has decided to find ways to get customers to use its website for information instead of calling the customer service line about home deliveries and installation-related needs. The company has a strong online and physical store presence in the United States, but app adoption is lagging. The ultimate goal for this project is a fully online self-service experience for customers.

My "UX Research Proposal" should contain my strategy on how I would go about understanding the research ask, planning for the research, and presenting my findings. My presentation should also address the research goals, how I identify and prioritize those goals, and a detailed walkthrough of a proposed research plan including methodology, timeline, and analysis plan.

User experience is about tailoring the design of a product or service to fit the needs of its users. User experience research is about thoroughly understanding who is involved with what you're building; their needs, goals, and the context in which they'll interact with your product; how well you're serving those needs; and where there are opportunities to create something that fits those needs better. Research informs design decisions and helps ensure that a business meets and exceeds users' expectations.

UX research can help General Electronics Co. get customers to use its website or app, rather than the customer service line, for home delivery and installation-related needs in three major ways:

- Saving development and process costs

- Increasing customer happiness and loyalty

- Uncovering opportunities to earn even more

One of the largest motivations for our client to invest in user experience research is that it can save development and process costs. Uncovering users' true needs means the company better understands which solutions will work for them, so it wastes less development time on features that won't be valuable and can account for requirements from the beginning. I often summarize this kind of research as making sure you're building the right thing.

Having a clear goal from the beginning of a project allows decisions to be made faster and reduces the risk of rework. Many studies show that code changes become more expensive to implement the later in the development process they happen; some estimate that changes made after deployment are up to 100 times more expensive than fixes made during the design stage. It is well worth investing early to make sure the company is building the right solution for its customers.

Additionally, we can perform research throughout development to ensure that the team is implementing the solution in a way that is easy to use. We sometimes call this “building it right.” Iterative testing also helps the team uncover customers' changing needs so that it can pivot if necessary.

We as UX researchers might also be able to identify and help address internal problems that are costing the company money. For instance, if a researcher conducts interviews and discovers that users of an online tool constantly contact the support call center because they don't understand how something works, the researcher could suggest contextual help or a clearer way to present the information so that users don't need to call. The company would then save money by needing fewer call center representatives. A UX researcher could apply the same approach to internal tools, such as the contact management system that call center representatives use, and recommend changes that save each representative a few moments per transaction, which adds up to significant savings over time. In general, handling a request through a digital service is likely to cost less than having customer service specialists process the same request over the phone.
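To make the savings argument concrete, here is a back-of-the-envelope sketch of both effects described above: calls deflected to self-service, and seconds shaved off each call-center transaction. The function names and every input value are hypothetical placeholders, not General Electronics Co. data; real figures would come from the call center and from web analytics.

```python
def annual_deflection_savings(calls_per_year: int,
                              deflection_rate: float,
                              cost_per_call: float,
                              cost_per_digital_contact: float) -> float:
    """Estimate yearly savings when a share of phone calls moves to self-service.

    All inputs are hypothetical placeholders, not General Electronics Co. data.
    """
    if not 0.0 <= deflection_rate <= 1.0:
        raise ValueError("deflection_rate must be between 0 and 1")
    deflected_calls = calls_per_year * deflection_rate
    # Each deflected call avoids the phone cost but still incurs the
    # (assumed smaller) cost of serving the customer digitally.
    return deflected_calls * (cost_per_call - cost_per_digital_contact)


def handle_time_savings(transactions_per_year: int,
                        seconds_saved_per_transaction: float,
                        agent_cost_per_hour: float) -> float:
    """Savings from shaving a few seconds off each call-center transaction."""
    hours_saved = transactions_per_year * seconds_saved_per_transaction / 3600
    return hours_saved * agent_cost_per_hour
```

For example, with the made-up inputs of 500,000 calls a year, 20% moved online, $6.00 per call, and $0.50 per digital contact, `annual_deflection_savings(500_000, 0.2, 6.0, 0.5)` comes to about 550,000. The point is not the number itself but that even small per-contact differences multiply quickly at call-center volume.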

Study after study shows that users are willing to leave a website or application if they have trouble finding what they're looking for or run into a usability problem. That may be why General Electronics Co.'s customers are not using its website. The company can use UX research to make it easy for customers to perform key actions, such as looking up delivery and installation information on the website instead of calling the customer service line. Ensuring ease of use also makes it less likely that customers will go to a competitor for the same service. To create truly passionate users, we need to go above and beyond by anticipating and serving their needs.

User experience research can help our client uncover opportunities for improvements or new features and functions that the company would not otherwise have known about but that could provide considerable value to its users. Conducting ongoing usability tests, interviews, and ethnographic studies on existing products allows our client to understand how users actually interact with its product or service. This shows both what is resonating well and what could be better, in the context in which the product is really used. We can also evaluate competitors' digital products the same way we evaluate our client's own, which can give our client another perspective on which functions users find valuable or reveal weaknesses it can improve on.

To choose the right research methodology, I first need the right information.

The first thing I need to consider is the organizational structure and development culture of General Electronics Co. Does the company use Waterfall or Agile project management?

Many companies are moving toward Agile-like development methodologies, and I assume General Electronics Co. does the same, so it's helpful to understand the limitations this places on research.

In an Agile environment, the whole team gets started designing and building right away. I don't usually have the luxury of time to deeply explore users' needs and goals before anyone starts. Even if I do get to work ahead of a sprint or two, I won't be able to be as thorough as I would in a Waterfall requirements phase, but the benefit of this Agile methodology is that I can keep doing small chunks of research in each sprint.

The other key step in determining research methodology is to examine which stage of the “product development life cycle” the client is in.

At a very high level, a product is typically in one of three stages:

- Strategizing something brand new

- Actively designing or building

- Assessing the performance of something that's live

When I am strategizing about something new, whether it's a completely new service or a new feature of legacy software, I need to focus my research on uncovering users' needs and goals based on Human-Centered Design guidelines, finding areas for improvement in users' existing solutions, and validating that my idea serves users in some unique and meaningful way.

During the active development stage, I am trying to answer whether UX designers and developers are building the product right.

I'll use the information I collect during this period to inform design and development decisions that optimize performance and help set priorities.

When we have a digital product or service that is live, I'll focus my assessments on summarizing trends and uncovering opportunities.

In general, there is no one formula for selecting the best method at any given time, but understanding the stage of product development can help me narrow down my goals and the time constraints I am working under, which can help guide my decision.

Once I've determined the high-level product phase, I'll examine the exact questions I'm trying to answer with each study. The more specific my goal, the easier it will be to choose a methodology. If I have multiple research goals, I can combine elements of different research methods to answer my questions efficiently.

For this research project with General Electronics Co., I recommend starting by assessing the performance of the existing website. I'll use quantitative behavioral research techniques, such as usage analytics, to understand trends, traits, and pain points on the website, and qualitative methods, such as usability testing of competitors' websites, to uncover opportunities in this business space. After analyzing the data, I'll tie the individual pieces together to create meaningful, actionable insights. It is my responsibility as the UX researcher not just to report back facts but to synthesize the data into information the team can use.

I can also strategize about adding a brand-new feature to the website. To determine users' goals and motivations, I focus on qualitative attitudinal methods, such as interviews. To understand their frustrations or gaps in service with existing solutions, I use more behavioral methods, such as moderated usability tests. Finally, to validate ideas, I may use a mix of qualitative and quantitative methods, such as a survey, to get a sense of both scale and additional context about users' needs.

In future sprints, during the active development stage, I'll typically rely on behavioral research methods such as card sorts, task-based usability tests, and A/B tests to inform design and development decisions. If there is more time, it can also help to add attitudinal methods, such as desirability studies.

Once a round of research is complete, I need to interpret the data and make recommendations to the rest of the team by tying the individual pieces of data together into meaningful, actionable insights. Each methodology has its own data analysis procedures; for instance, I can't analyze quantitative survey data the same way I would analyze notes from moderated interviews. Either way, my job as a UX researcher is to synthesize the data into information the team can use, not just to report back facts.

Depending on the phase and type of research, that might take the form of personas, recommended changes to an interface, or even hypotheses for the next round of research.

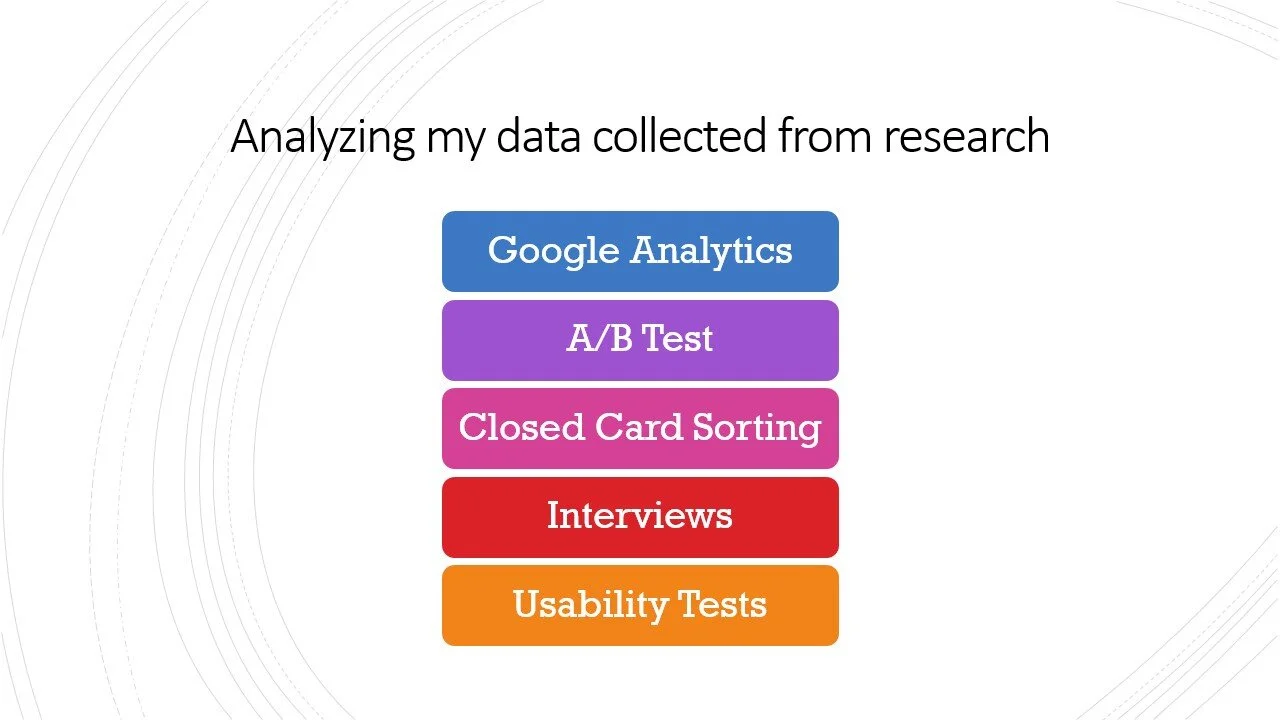

The first thing I need to do after finishing the study is to gather and organize all the data.

I would export the raw data from Google Analytics, the A/B tests, and the closed card sorts. Most quantitative tools have simple analysis and charting functions I can use; I just need to make sure I look at the full scope of the data before drawing any conclusions.
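When a tool's built-in charts show two A/B variants with different success rates, one common way to check whether the gap is more than noise is a two-proportion z-test. The sketch below assumes the exported data can be reduced to a success count and a visitor count per variant, where "success" is a hypothetical binary outcome such as a visitor finding delivery status without calling. It uses the normal approximation, so it assumes reasonably large samples; it is a generic statistical check, not a feature of any particular analytics product.

```python
import math


def two_proportion_z_test(successes_a: int, visitors_a: int,
                          successes_b: int, visitors_b: int) -> tuple[float, float]:
    """Compare the success rates of variants A and B.

    Returns (z, two_sided_p_value). "Success" is whatever binary outcome
    the test tracked (an assumption about how the data was exported).
    """
    if visitors_a <= 0 or visitors_b <= 0:
        raise ValueError("each variant needs at least one visitor")
    rate_a = successes_a / visitors_a
    rate_b = successes_b / visitors_b
    # Pooled rate under the null hypothesis that both variants perform the same.
    pooled = (successes_a + successes_b) / (visitors_a + visitors_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    if std_err == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

A positive `z` means variant B performed better. A small p-value (0.05 is a common, though arbitrary, cutoff) suggests the difference is unlikely to be chance alone, which is the kind of check worth doing before reporting a winner to the team.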

For moderated research, including interviews and usability tests, I would have a combination of handwritten and typed notes from a variety of people, digital audio and video files, and paper forms filled out by participants. When analyzing moderated research, I create one large spreadsheet with a row for every participant, including their general demographic information, the notes from each session, and links to any other files or information pertaining to the research. Having everything in one overview makes it easier to see the big picture.
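The one-row-per-participant spreadsheet can be sketched as a small data structure exported to CSV, which any spreadsheet tool can open. The column names here (`age_range`, `region`, and so on) are hypothetical stand-ins for whatever demographic fields a real study collects, and joining file links into a single cell is one design choice among several.

```python
import csv
import io
from dataclasses import dataclass, field


@dataclass
class ParticipantRow:
    """One row of the overview spreadsheet (column names are hypothetical)."""
    participant_id: str
    age_range: str
    region: str
    session_notes: str
    file_links: list[str] = field(default_factory=list)


COLUMNS = ["participant_id", "age_range", "region", "session_notes", "file_links"]


def write_overview(rows: list[ParticipantRow], out) -> None:
    """Write one CSV row per participant so every session sits in one table."""
    writer = csv.DictWriter(out, fieldnames=COLUMNS)
    writer.writeheader()
    for row in rows:
        writer.writerow({
            "participant_id": row.participant_id,
            "age_range": row.age_range,
            "region": row.region,
            "session_notes": row.session_notes,
            # Keep all audio, video, and scanned-form links in one cell.
            "file_links": "; ".join(row.file_links),
        })


if __name__ == "__main__":
    buffer = io.StringIO()
    write_overview([
        ParticipantRow("P01", "25-34", "Midwest",
                       "Could not find main menu on first try",
                       ["P01_session.mp4", "P01_consent.pdf"]),
    ], buffer)
    print(buffer.getvalue())
```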

The next step is to break down this large amount of information, mining the notes for pertinent facts, quotes, or points that relate to the key goals of my research. Let's say I was looking through the notes from a moderated usability test of a new feature on the General Electronics Co. website, and I was testing users' reactions to the navigation. If I noticed that none of the participants were able to find the main menu on their first try, that would be a key finding to note. I recommend writing each individual finding on its own sticky note.

If team members observe sessions, I plan to have this breakdown happen in the debriefing session with as much of the project team as possible. I remind each team member of the key goals and hypotheses of the research and have everyone mine their own notes and write up the main things they observed. Including everyone helps make sure that all parties are invested in the research process and understand its full breadth. It also ensures that I don't miss any points of view.

If I'm analyzing data on my own, I create one set of takeaways for each participant so I can spot trends across people. Once I've mined the notes for key points, the next step is to organize them. I like to use a research method called card sorting to organize my own findings.

Typically, I find that it makes sense to do a closed card sort in which the main categories match the main goals of the research. Essentially, I take each key point my team and I have identified and sort it into one of the predefined goal categories. For instance, if I was usability testing a new feature for ease of use, I might have had goals like these: “Do users know how to enter their credentials? Do users understand the hamburger menu icon? Can users easily log out?” Given these goals, my finding categories would be Credentials, Hamburger Icon, and Logout.

I also create a separate category for additional findings that don't directly correspond to my predefined goals. During the card sort, I can easily spot trends and potential anomalies. For instance, if all 10 participants had trouble finding something, there is very likely a usability and findability issue that I need to address.
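The closed sort and the trend check can be sketched in a few lines, assuming each sticky note has been recorded with the participant it came from and a tag the team assigned. The category names come from the example goals in this section; the `Finding` type and the function names are hypothetical. Counting distinct participants per category, rather than raw notes, keeps one very talkative participant from looking like a trend.

```python
from collections import defaultdict
from dataclasses import dataclass

# Predefined categories mirror the example research goals in this section.
GOAL_CATEGORIES = ("Credentials", "Hamburger Icon", "Logout")
OTHER = "Additional findings"


@dataclass(frozen=True)
class Finding:
    """One sticky note: who it came from, the tag the team gave it, the note."""
    participant: str
    tag: str
    note: str


def closed_sort(findings: list[Finding]) -> dict[str, list[Finding]]:
    """File each finding under its goal category, or under OTHER if none fits."""
    groups: dict[str, list[Finding]] = defaultdict(list)
    for finding in findings:
        category = finding.tag if finding.tag in GOAL_CATEGORIES else OTHER
        groups[category].append(finding)
    return dict(groups)


def participant_share(groups: dict[str, list[Finding]],
                      total_participants: int) -> dict[str, float]:
    """Fraction of participants with at least one finding in each category."""
    return {
        category: len({f.participant for f in items}) / total_participants
        for category, items in groups.items()
    }
```

If every one of 10 participants contributed a Hamburger Icon finding, `participant_share` reports 1.0 for that category, which is the "all 10 participants" signal described above; a share of 0.1 in Additional findings would more likely be an anomaly worth a second look than a trend.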

If I can do this with the team, we take the time to discuss why each finding is important, what it means in the context of the product, and potential solutions or recommendations. Doing this process on my own also helps me think through the context and crystallize findings into meaningful insights. If I am still having trouble understanding or articulating the context of my findings, I map the main takeaways across two dimensions that are important to my project and examine where the findings fall in the resulting matrix. This gives me a clearer picture.
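Mapping findings onto a two-dimensional matrix amounts to splitting each axis at a midpoint and grouping findings by the resulting cell. In this sketch the two dimensions are an assumption for illustration (for example, x as the share of participants affected and y as an estimated impact on customers calling in), both normalized to the range 0 to 1; any two dimensions that matter to the project would work the same way.

```python
def quadrant(x: float, y: float, midpoint: float = 0.5) -> tuple[str, str]:
    """Return the (x-level, y-level) cell for scores normalized to 0..1."""
    for score in (x, y):
        if not 0.0 <= score <= 1.0:
            raise ValueError("scores must be normalized to the range 0..1")
    return ("high" if x >= midpoint else "low",
            "high" if y >= midpoint else "low")


def build_matrix(findings: list[tuple[str, float, float]]) -> dict[tuple[str, str], list[str]]:
    """Group finding labels (label, x, y) into the four cells of a 2x2 matrix."""
    matrix = {(h, v): [] for h in ("low", "high") for v in ("low", "high")}
    for label, x, y in findings:
        matrix[quadrant(x, y)].append(label)
    return matrix
```

Findings that land in the ("high", "high") cell are the natural candidates to discuss first with the team, while an empty cell can itself be informative.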

It's not enough just to report back hard facts. I need to give my team a deep understanding of what I found and what it means for the website as a whole.

Once I've analyzed my research and come up with the key takeaways and suggestions, my biggest task is to share my insights and make sure my recommendations are actually implemented. After all, research doesn't matter if no one uses the information.

In this project, I am acting as a consultant rather than as a fully embedded team member, so I need to write a formal report documenting all the details of my methodology and findings. I also create an executive summary of key information and findings, because most people will not have time to read a lengthy report.

In the body of the report, I include a mixture of visuals and text to appeal to the different ways people best learn and interpret data.

For instance, if I created a matrix to distill my insights, I take a picture of it or create a digital version to include in the report. If I usability tested a prototype, I include screenshots of the prototype with key areas of interest highlighted, or video clips of participants interacting with it. I can also create a simple spreadsheet of findings and recommendations to give readers a quick view of the highlighted takeaways.

I also usually include particularly relevant or emotional quotes, or screenshots of participants' faces if I can. In my report, I also link to the research plans, related sites, and summaries of previous research.

While a report is useful as a summary deliverable and to document findings, I don't let it be my only method of sharing findings. I almost always schedule a whole-team discussion of the takeaways and their implications; it usually takes about 30 minutes to go through the key insights I uncovered.

We discuss what I observed, why it matters to the team, and what the team should do.

As an example, if I found a key usability issue in the sign-up flow of the delivery page on our client's website, I wouldn't just say, "It's difficult for customers to enter their credit card information." Instead, I'd say something like this: "It's difficult for customers to enter their credit card information because we ask for the expiration date in a format that doesn't match the way it appears on their card. Many participants get frustrated at this point and drop out, which results in abandoned sales and a large potential loss for the company. We should tweak the form so that the date selector matches what customers see on their own cards."

However I decide to document results, the most important thing I need to do with my data is connect it to the broader context of the business. I also need to help people understand why it matters and what they should do about it.